Data Distillation

Learn how to transfer knowledge from API-based teacher models to a smaller student model, achieving similar performance at lower inference cost.

Purpose and Overview

Data Distillation trains a smaller "student" model to replicate the behavior of a larger "teacher" model that is accessed through API. Unlike knowledge distillation, the student can only access the teacher's outputs (correct answers), not its internal states or reasoning process.

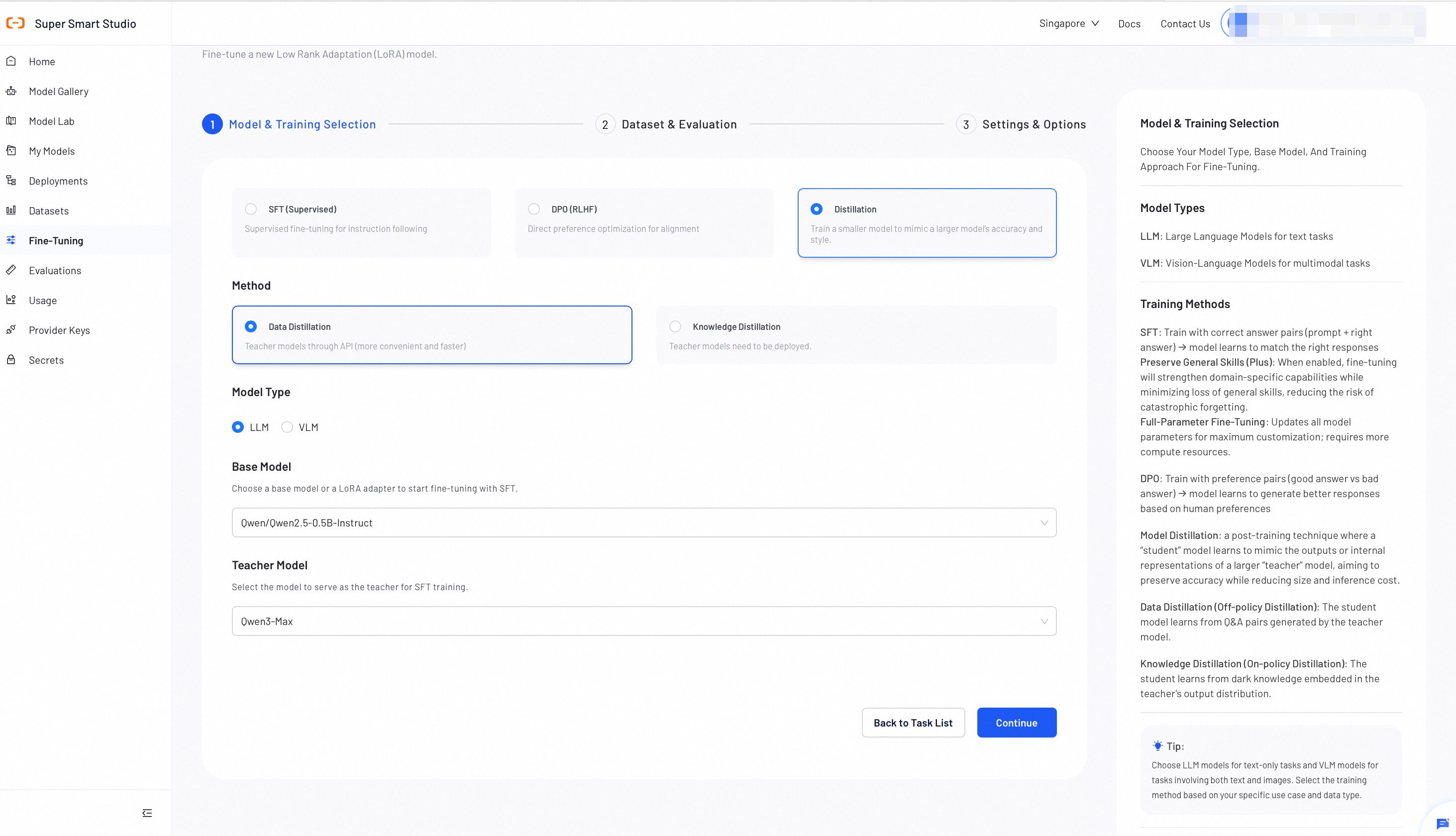

Step 1: Select Models

Select a teacher model and a base model for the distillation process. The teacher provides the knowledge, and the base model learns to replicate it.

Base Model

The Base model is the smaller model that learns from the teacher. After training, you deploy the base model for inference.

Teacher Model

The teacher model is a larger model accessed via API. It generates the reference outputs that the base model learns from during training.

- Choose a teacher model with strong performance on your target task. Larger models generally produce better teaching signals.

- Choose a base model significantly smaller than the teacher for maximum efficiency gains.

- The base model should share the same model family as the teacher for best results. (e.g., Qwen teacher → Qwen base model)

- Start with Instruct models for most conversational tasks. (e.g.,

Qwen3-4B-Instruct-2507) - Choose Thinking models when the task requires step-by-step reasoning. (e.g.,

Qwen3-4B-Thinking-2507) - Use Base models when you need maximum customization flexibility. (e.g.,

Qwen3-4B)

After completing all selections, click Continue.

Step 2: Dataset & Evaluation

Upload a training dataset and optionally a validation dataset to monitor training progress.

Smart Studio provides multiple ways to prepare datasets:

- Upload a dataset directly. For instructions, see Create Datasets.

- Use AI Dataset Preparation to automate the dataset creation process.

- Provide the OSS address of the data without uploading the file to the platform.

Dataset Requirements

File Format

File must be in JSONL format with each line containing a complete conversation example.

Dataset Size

Recommended size: 100–100,000 examples. High-quality, diverse examples produce the best distillation results.

Image Quality(VLM Only)

High-quality images are essential. Ensure images are clear, properly formatted, and relevant to the task.

- Ensure diverse examples covering different scenarios and edge cases

- Maintain consistent response quality and style throughout the dataset

- Include both positive and negative examples where applicable

- Validate that all examples follow the required JSON schema

- LLM Data Format

- VLM Data Format

Required Data Format

{

"messages": [

{"role": "system", "content": "<system>"},

{"role": "user", "content": "<query1>"},

{"role": "assistant", "content": "<response1>"},

{"role": "user", "content": "<query2>"},

{"role": "assistant", "content": "<response2>"}

]

}

Format Explanation

-

system: Optional system prompt to define model behavior and context

-

user: User input or query that the model should respond to

-

assistant: Expected model response for the given user input

Each JSONL file line should contain one complete conversation example

Example Data Formats

{"messages": [

{"role": "system", "content": "You are a useful and harmless assistant"},

{"role": "user", "content": "tell me the weather tomorrow"},

{"role": "assistant", "content": "Sunny tomorrow"}

]}

{"messages": [

{"role": "user", "content": "What is the capital of France?"},

{"role": "assistant", "content": "The capital of France is Paris."}

]}

{"messages": [

{"role": "system", "content": "You are a technical support assistant"},

{"role": "user", "content": "How do I reset my password?"},

{"role": "assistant", "content": "To reset your password, please follow these steps:

1. Go to the login page

2. Click 'Forgot Password'

3. Enter your email address

4. Check your email for reset instructions"

}

]}

Required Data Format

{

"messages": [

{"role": "system", "content": "<system>"},

{"role": "user", "content": "<query1>"},

{"role": "assistant", "content": "<response1>"}

],

"images": ["/xxx/x.jpg", "/xxx/x.png"]

}

Format Explanation

- When user-content contains placeholders like

<image>, they should correspond to the order in the images field, with matching quantities - When

<image>count is 0, it corresponds to Distill-LLM, allowing 0 images <image>only appears in user-content

Example Data Formats

{"messages": [

{"role": "user", "content": "Where is the provincial capital of Zhejiang?"},

{"role": "assistant", "content": "The provincial capital of Zhejiang is in Hangzhou. "}

]}

{"messages": [

{"role": "user", "content": "<image><image>What's the difference between these two images?"},

{"role": "assistant", "content": "The first one is a kitten, the second one is a puppy"}

],

"images": ["/xxx/x.png"]

}

{"messages": [

{"role": "user", "content": "<audio>What did the voice say?"},

{"role": "assistant", "content": "Today's weather is really nice"}

],

"audios": ["/xxx/x.mp3"]

}

{"messages": [

{"role": "system", "content": "You are a helpful assistant"},

{"role": "user", "content": "<image>What's in the picture, <video>what's in the video"},

{"role": "assistant", "content": "There's an elephant in the picture, and a puppy lying on the grass in the video"}

],

"images": ["/xxx/x.jpg"],

"videos": ["/xxx/x.mp4"]

}

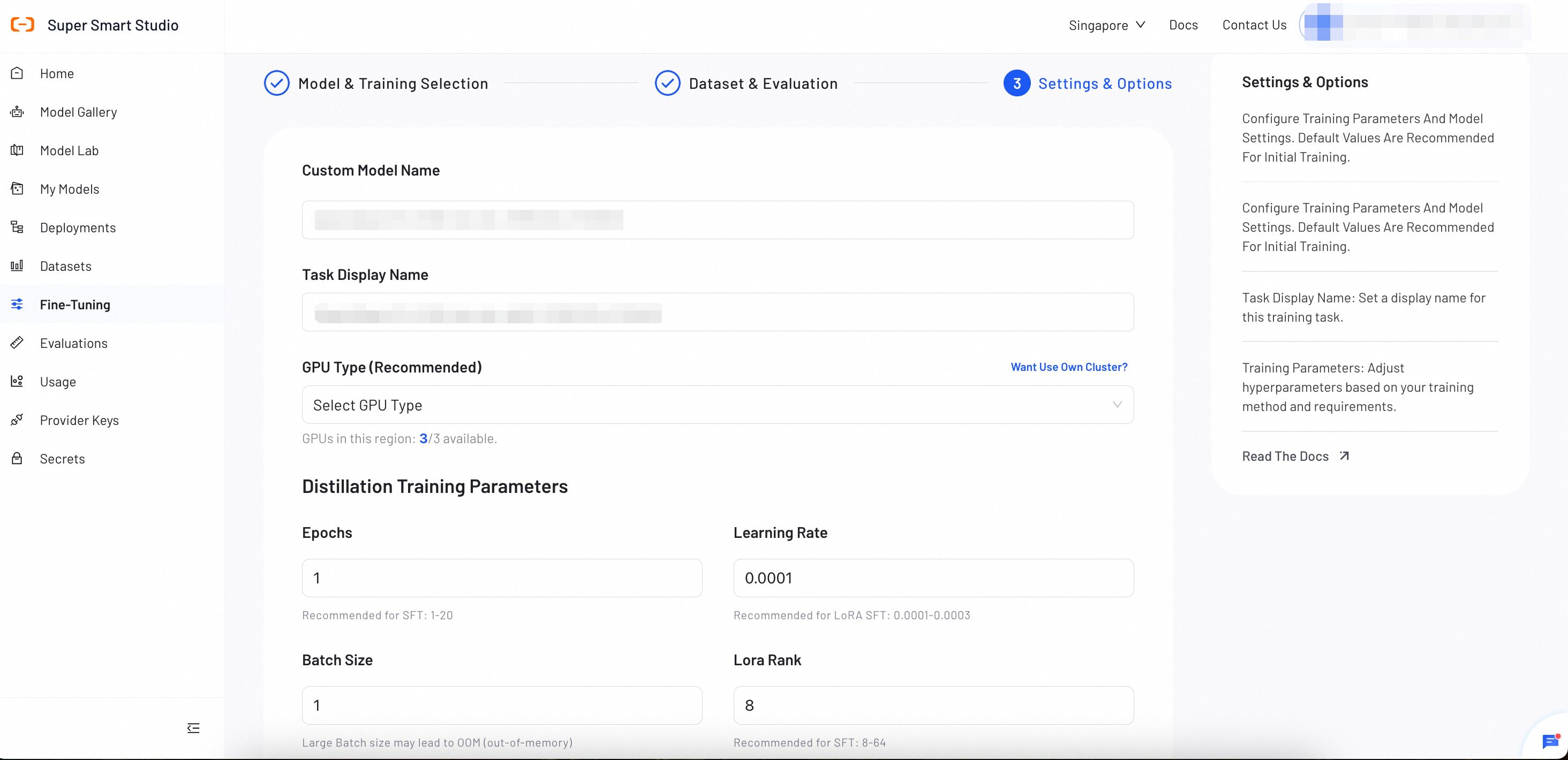

Step 3: Settings & Options

Configure distillation training parameters and model settings. Default values are optimized for knowledge transfer, but you can adjust them based on your specific requirements and dataset characteristics.

Basic Configuration

Custom Model Name

Used for display in My Models for management purposes. Choose a descriptive name that helps you identify the model's purpose and version.

Example: "Distilled-Qwen-7B-v1" or "Customer-Support-Student-Model"

Task Display Name

Set a display name for this distillation training task. This name appears in the Fine-tuning task list and helps you track training progress and history.

Example: "Q1-2025-Distillation" or "Qwen-70B-to-7B-Distill"

Training Parameters

The following parameters apply to distillation training. LoRA is the default fine-tuning method.

| Parameter | Definition | Tuning Impact |

|---|---|---|

| epoch | The number of complete passes through the training dataset. | Increase: More learning opportunities, but the model may perform well on training data while producing poor results on new inputs. Decrease: Trains faster, but the model may not learn enough to perform well. |

| batch_size | Defines the number of training examples to process in a single group. Large Batch size may lead to out-of-memory. | Increase: Produces more consistent training updates, but uses significantly more GPU memory. Decrease: Reduces GPU memory usage, but training updates may become less consistent. |

| learning_rate | Controls the size of each adjustment the model makes during training. | Increase: The model learns faster, but training may become unstable and fail to reach a good solution. Decrease: Training becomes more stable and precise, but takes longer and may settle for a solution that is not optimal. |

| lora_rank | Sets the learning capacity of the LoRA adapters. | Increase (e.g., 16, 32): Improves the model's ability to learn complex tasks, but uses more GPU memory. Decrease (e.g., 4, 8): Reduces GPU memory usage, but the model may struggle with complex tasks. |

Advanced Parameters

These parameters work well with default values for most use cases. Adjust only when needed.

| Parameter | Definition | Tuning Impact |

|---|---|---|

| max_context_length | Sets the maximum token limit per example. Texts exceeding this limit will be truncated. | Increase to learn from longer texts, but this significantly increases GPU memory usage. |

| warmup_ratio | Specifies the fraction of the training process to use for a "warm-up" phase. During this phase, the learning rate slowly increases to prevent early training instability. | A small value (0.03–0.1) is generally recommended. This is primarily a stability mechanism, not a performance tuning parameter. |

| gradient_accumulation_steps | Specifies the number of small batches to process before the model performs a single learning update. This simulates a larger batch size to save memory. | Increase to achieve more stable training at the cost of slower speed. A value of 1 disables this feature. |

| target_modules | Identifies the specific internal components (layers) of the model that will be modified by LoRA. | Adding more modules allows more comprehensive adaptation but increases trainable parameters. |

After reviewing all the configuration, click Create Task to begin the training process.

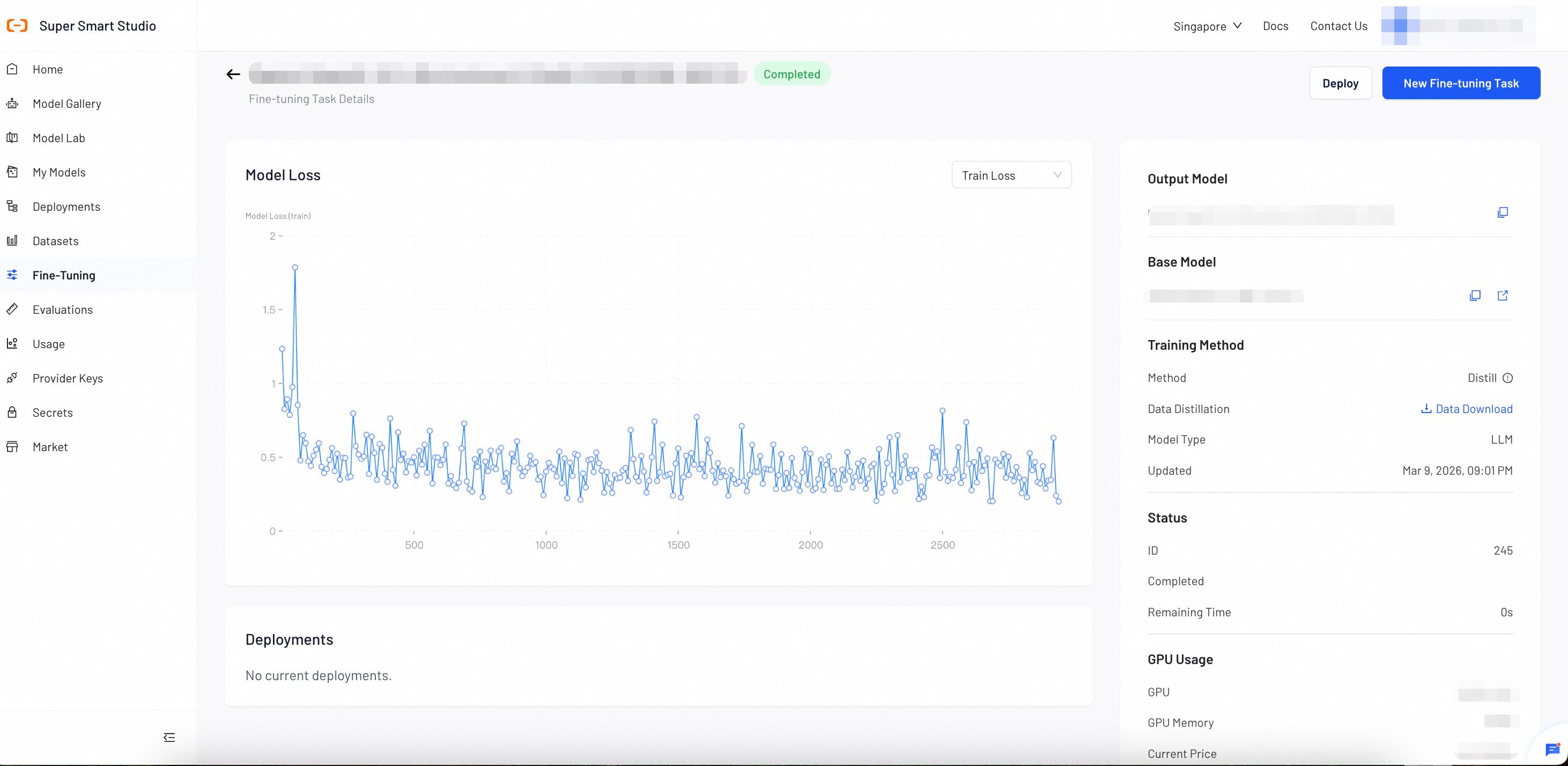

Step 4: Monitor Training Progress

During and after training, check key training metrics at any time.

The Model Loss chart displays two metrics:

- Training Loss: Measures how well the model learns from your training data.

- Validation Loss: Measures how well the model generalizes to unseen data.

- If both losses decrease steadily, your model is learning well. Continue training.

- If training loss decreases but validation loss increases, your model may be overfitting. Stop training and deploy the current model.

- If both losses remain high or increase, your training data or configuration may need adjustment. Review your dataset and parameters.

Parameter Tuning Guidelines

- Start with defaults: Default values work well for most use cases.

- Increase LoRA rank: Increase to 16, 32, 64, or 128 for complex tasks.

- Adjust learning rate: Lower values for stable training, higher values for faster convergence.

- Monitor validation loss: Watch for a decrease in validation loss.

Next Steps

Once training is complete, deploy your distilled student model to a production endpoint for real-world usage.

Test your distilled model's performance and compare against the teacher model in our interactive testing environment.